But what it’s really done is shove the issue to the client side, making the app entirely responsible for the traffic patterns that emerge.

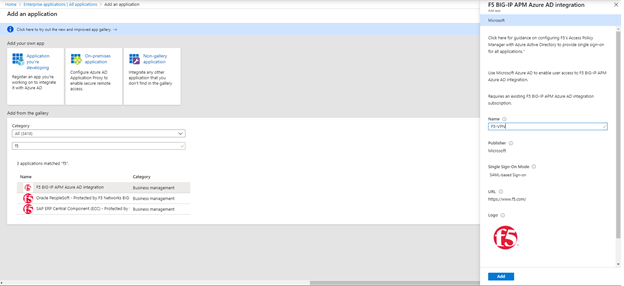

Some developers would say, all things being random, that this scheme would resolve the whole load balancing issue. Imagine a phone app making a similar decision, given a half-dozen or so IP addresses, about which one is most responsive. If you’ve ever waited in line in a post office along with dozens of people and six active clerks, you know that the length of each line is never a good indicator about the time you may spend there. Your phone may be making decisions about which IP to talk to, based on its assessment of traffic. Without that proxy in the middle, there’s really not much load balancing.”īelieve it or not, MacVittie said to my astonishment, many phone and tablet apps today actually delegate the logic for which IP address to which it addresses its requests, to the client-side app. Once you add a second instance of a container,” explained MacVittie, “something has to provide the endpoint to load-balance across. “Let’s say you have an API, and it’s got one server handling it. Rather than attempt to usurp Kubernetes or Mesos, the BIG-IP system works with orchestrators and schedulers. This way, traffic may be monitored and governed in real-time, and scalability can take place in a manner that’s more sensitive to what the incoming requests are, as well as how many. It creates a proxy checkpoint that represents the entire microservices conglomerate as a single entity, for any service or other application attempting to communicate with it. The purpose there is to enable security and firewall policies that also govern access to an application on-premises, to one that’s deployed on a cloud platform, including the public cloud.Ĭontainer Connector, announced at the same time but generally released Friday, extends that same premise to microservices. That system began with Application Connector, a component which links cloud-based applications to F5’s Big-IP application delivery controller.

MacVittie’s discussion with us came by way of F5’s introduction Friday of a component called Container Connector, to a system it started building last November for integrating microservices with existing networks. It has to hook into the environment and work as part of the system.” Checkpoint Charlie

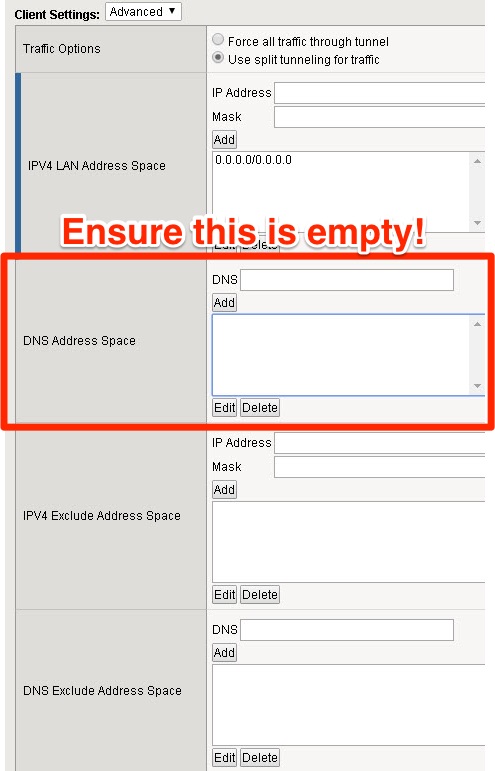

#Is the new f5 vpn client better manual#

Something has to provide that on the front end, to say, ‘Hey, I’m your application, and I’ll take care of scaling on the back end.’ No matter what that solution is, it has to have a way to be automatically updated with the right information, because manual processes aren’t going to work here. “A virtual service, a virtual IP, a virtual server, fronting that - scaled by multiple versions of that service or application within the cluster. “In a cluster, you have to have something that provides the endpoint for clients to connect to,” remarked Lori MacVittie, F5 Networks’ Technical Evangelist, speaking with The New Stack. And if a distributed systems application is, as they say, a network, that’s a problem. Making room for itself on the same side of the stage is the equally valid argument that you can’t secure a network you don’t understand.

On one side of the stage is the argument that serverless development, where the developer never sees or cares about the underlying infrastructure, is truly cloud-native.

#Is the new f5 vpn client better portable#

With containerization hoisting both portable deployment and distributed systems into the same spotlight, the result may be a clash of mindsets.

#Is the new f5 vpn client better free#

Originally, those layers were supposed to free the applications developer, way up on Layer 7 of the OSI stack, from having to mess about with all the dirty, infrastructural affairs of Layer 2 or 3. Yet there are too many technologies intentionally developed to bolster the layers of abstraction between applications and networks. We try to portray it as a blending of responsibilities and job functions, especially when we’re trying to sell a product to two customer bases simultaneously: DevOps, the merger of development and automation.
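The alternative MacVittie argues for is a proxy in the middle: one stable endpoint that sees every request and is kept current automatically as container instances come and go. The Python below is a minimal sketch of that idea under stated assumptions, not a description of how BIG-IP or Container Connector actually work. The `VirtualServer` class, its `add_member`/`remove_member` methods (standing in for orchestrator scale events), and the least-outstanding-requests policy are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class VirtualServer:
    """Hypothetical proxy endpoint: one stable address fronting many instances."""

    vip: str
    # In-flight request count per backend instance address.
    active: dict[str, int] = field(default_factory=dict)

    # Assumed orchestrator hooks, called on scale-up / scale-down events so
    # the member list stays current without a manual configuration step.
    def add_member(self, addr: str) -> None:
        self.active.setdefault(addr, 0)

    def remove_member(self, addr: str) -> None:
        self.active.pop(addr, None)

    def route(self) -> str:
        """Send one request to the member with the fewest in-flight requests."""
        if not self.active:
            raise RuntimeError(f"{self.vip}: no members to route to")
        # Every request passes through here, so the counts reflect load from
        # all clients, not one client's private view. Ties go to the
        # lexicographically smallest address so the choice is deterministic.
        addr = min(self.active, key=lambda a: (self.active[a], a))
        self.active[addr] += 1
        return addr

    def finish(self, addr: str) -> None:
        """Record that one request to addr has completed."""
        if self.active.get(addr, 0) > 0:
            self.active[addr] -= 1


if __name__ == "__main__":
    vs = VirtualServer("10.0.0.100")
    vs.add_member("10.1.0.1")               # one container instance
    print([vs.route() for _ in range(3)])   # all three go to 10.1.0.1
    vs.add_member("10.1.0.2")               # orchestrator adds a second
    print(vs.route())                       # 10.1.0.2: 0 in flight vs. 3
```

Because this proxy counts every in-flight request, it can steer new traffic to the instance that just joined. A phone choosing among the same addresses would only see its own requests, which is the post office problem again.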